
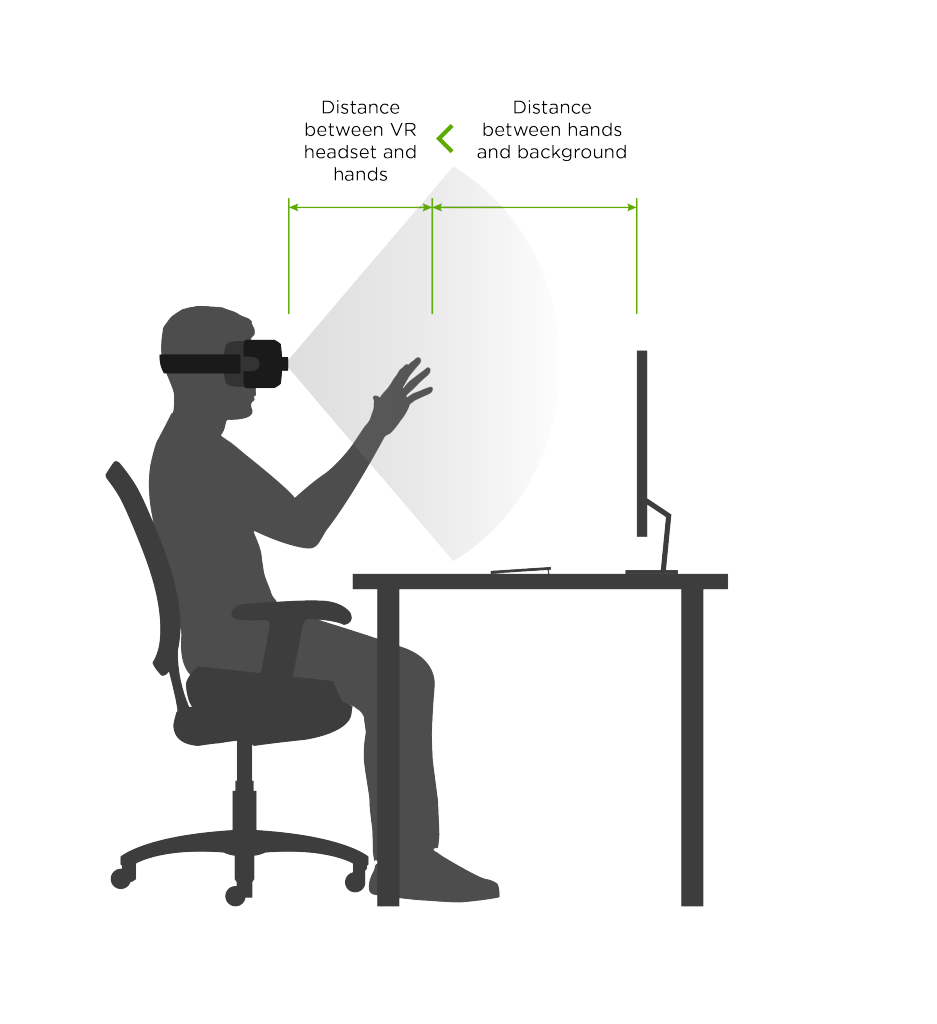

Methods for the automatic understanding of gestures are important in many application contexts, and in Virtual Reality applications in particular, where they enable more natural and easy-to-use human-computer interaction. In this paper, we present a method for the recognition of a set of non-static gestures acquired through the Leap Motion sensor. The acquired gesture information is converted into color images: the variation of the hand joint positions during the gesture is projected onto a plane, and temporal information is represented by the color intensity of the projected points. The gestures are classified using a deep Convolutional Neural Network (CNN). A modified version of the popular ResNet-50 architecture is adopted, obtained by removing the last fully connected layer and adding a new layer with as many neurons as there are gesture classes. The method has been successfully applied to the existing reference dataset, and preliminary tests have already been performed on the real-time recognition of dynamic gestures performed by users.
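The trajectory-to-image encoding described above can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `gesture_to_image`, the choice of the XY plane as the projection plane, the linear brightness ramp over time, the 64-pixel default size, and the white base color are all assumptions made for illustration, since the text does not specify them.

```python
from typing import List, Sequence, Tuple

Point3D = Tuple[float, float, float]
Frame = Sequence[Point3D]          # one 3D position per tracked hand joint
Pixel = Tuple[int, int, int]       # RGB, 0..255


def gesture_to_image(
    frames: Sequence[Frame],
    size: int = 64,                          # assumed image side length
    base_color: Pixel = (255, 255, 255),     # assumed color at full intensity
) -> List[List[Pixel]]:
    """Project joint positions onto the XY plane (an assumption) and
    encode time as color intensity: later frames are drawn brighter."""
    image = [[(0, 0, 0) for _ in range(size)] for _ in range(size)]

    xs = [p[0] for frame in frames for p in frame]
    ys = [p[1] for frame in frames for p in frame]
    if not xs:
        return image  # nothing to draw

    # Bounding box of the whole gesture, used to normalize coordinates.
    min_x, max_x = min(xs), max(xs)
    min_y, max_y = min(ys), max(ys)
    span_x = (max_x - min_x) or 1.0   # avoid division by zero
    span_y = (max_y - min_y) or 1.0

    n = len(frames)
    for t, frame in enumerate(frames):
        # Linear ramp: first frame is darkest, last frame is full intensity.
        color = tuple(c * (t + 1) // n for c in base_color)
        for x, y, _z in frame:
            col = int((x - min_x) / span_x * (size - 1))
            # Flip the vertical axis so larger y appears higher in the image.
            row = int((max_y - y) / span_y * (size - 1))
            image[row][col] = color   # later frames overwrite earlier ones
    return image


# Usage: two frames of a single joint moving from bottom-left to top-right.
demo = gesture_to_image([[(0.0, 0.0, 0.0)], [(1.0, 1.0, 0.0)]], size=4)
```

The resulting grid of RGB pixels is the kind of input a CNN image classifier expects, so a whole dynamic gesture can be classified as a single static image.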
